Baby Dragon Hatchling

The lizard brain we are growing for 8gent. Phase 2b. Best val_loss 0.885.

Baby Dragon Hatchling is an open-weight language model, trained from scratch on an M2 Max, paper-faithful to Pathway's BDH architecture (arXiv:2509.26507). Apache 2.0, byte-level vocab, no closed-weight teachers. Four training phases on a curated corpus of sessions, code, docs, and prose - each phase measured against a held-out validation set. Phase 2b closed at a best val_loss of 0.885.
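
For readers new to byte-level vocabularies, here is a minimal sketch. It assumes the vocab is simply the 256 possible UTF-8 byte values; the page says "byte-level" but does not spell out the mapping. The function names `encode` and `decode` are illustrative, not taken from the BDH codebase.

```python
def encode(text: str) -> list[int]:
    """Map text to token ids, one id per UTF-8 byte (values 0..255)."""
    return list(text.encode("utf-8"))


def decode(ids: list[int]) -> str:
    """Map ids back to text; malformed byte sequences become U+FFFD."""
    return bytes(ids).decode("utf-8", errors="replace")


ids = encode("héllo")
print(len(ids))      # 6 ids for 5 characters: "é" takes two bytes
print(decode(ids))   # héllo
```

The trade-off with this kind of scheme is that no learned tokenizer is needed and nothing is ever out of vocabulary, but sequences are longer than with subword tokens.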

Role in the harness

Today: he is a research artifact. He produces structurally valid session JSON, conditions on a <<source:path>> style switch as a soft mode signal, and has measurably stopped memorising. He still cannot route. He still cannot speak coherent English. Both are next on the bench.

Intended role: 8gent routes tasks across a large surface area - research, code, communication, memory, multi-step plans. The training goal is not general intelligence but sharper routing: which tool, which persona, which plan structure fits a given input. Once an eval harness scores his decisions against a labelled gold set, BDH starts driving routing in shadow mode behind the production stack via the Throne integration (PRD W0-W3).

The path is: artifact today, shadow-mode tomorrow, default-on routing when his evals beat the heuristic baseline by a measurable margin. Never on by default until the gates pass.
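
The gate described above could be sketched roughly as follows. Everything here is hypothetical: the `Example` record, the `gate_passes` helper, and the 0.05 margin are placeholders, since the page only says "a measurable margin" and does not describe the eval harness's data format.

```python
from dataclasses import dataclass


@dataclass
class Example:
    gold: str       # labelled correct route from the gold set
    model: str      # route the model chose in shadow mode
    baseline: str   # route the heuristic baseline chose


def accuracy(examples: list[Example], field: str) -> float:
    """Share of examples where the named field matches the gold label."""
    hits = sum(1 for ex in examples if getattr(ex, field) == ex.gold)
    return hits / len(examples)


def gate_passes(examples: list[Example], margin: float = 0.05) -> bool:
    """True only if the model beats the baseline by at least `margin`."""
    if not examples:
        return False  # no evidence: routing stays off
    return accuracy(examples, "model") - accuracy(examples, "baseline") >= margin


shadow_log = [
    Example(gold="code", model="code", baseline="code"),
    Example(gold="research", model="research", baseline="code"),
    Example(gold="memory", model="memory", baseline="memory"),
    Example(gold="plan", model="code", baseline="code"),
]
print(gate_passes(shadow_log))  # model 3/4 vs baseline 2/4
```

Defaulting to `False` on an empty log mirrors the "never on by default" rule: the absence of eval data is treated as a failed gate.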

The hatchling framing is intentional. This is an early-stage model, still learning, still accumulating training examples and eval data. The dart-throw animation on this page maps throw accuracy to val_loss: lower loss means tighter clusters around the bullseye. Once the eval harness ships, real scores flow through and the dragon earns his aim in real time.

Training history

Four runs across two variables. Source: PHASE-2-SYNTHESIS.md (PR 2016).

[Charts: Loss curves; Best validation loss per phase]

Memorisation regression

| Phase | Result | Note |
|---|---|---|
| Phase 0 | FAIL | anomalous - rule-based corpus has near-zero entropy |
| Phase 1 | FAIL | Phase 0 carryover dominated; verbatim regurgitation persisted |
| Phase 2a | PASS | carryover dropped; out-of-distribution prompts produce new content |
| Phase 2b | PASS | produces TypeScript-style interface declarations on out-of-distribution prompts |
| Phase 3c | PASS | corpus shape change beats both heterogeneous baselines on byte loss |
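
The page does not say how the memorisation regression is scored. One common way to detect verbatim regurgitation, shown here purely as an illustrative sketch, is to measure what fraction of a sample's byte windows appear unchanged in the training corpus. The helper name `verbatim_fraction` and the window size are assumptions.

```python
def ngram_set(data: bytes, n: int) -> set[bytes]:
    """All n-byte windows that occur in `data`."""
    return {data[i:i + n] for i in range(len(data) - n + 1)}


def verbatim_fraction(sample: bytes, corpus: bytes, n: int = 16) -> float:
    """Fraction of the sample's n-byte windows found verbatim in the corpus."""
    windows = [sample[i:i + n] for i in range(len(sample) - n + 1)]
    if not windows:
        return 0.0  # sample shorter than one window: nothing to compare
    seen = ngram_set(corpus, n)
    return sum(w in seen for w in windows) / len(windows)


corpus = b"the quick brown fox jumps over the lazy dog"
copied = corpus[4:30]                 # lifted straight from the corpus
novel = b"interface Dragon { aim: number }"
print(verbatim_fraction(copied, corpus, n=8))
print(verbatim_fraction(novel, corpus, n=8))
```

Under this reading, a FAIL row would correspond to a fraction near 1.0 on out-of-distribution prompts and a PASS row to a fraction near 0.0; any actual threshold would be set by the project.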

Phase 2b corpus mix

- 67% code, 575 files
- 14% sessions, 119 files
- 8% docs, 69 files
- 7% world, 40 files
- 4% blog, 14 files

Slope ratio 3:1 in favour of corpus over capacity. 4x corpus dropped val by 16%; 2x params dropped val by 5%.
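
The page does not define "slope", so the arithmetic below tries two readings of the numbers quoted above. The first is the raw ratio of the two relative drops. The second, which is an assumption on our part, normalises by scale factor using a log-log slope, as is usual for scaling-law fits.

```python
import math

corpus_scale, corpus_drop = 4.0, 0.16   # 4x corpus, val loss down 16%
params_scale, params_drop = 2.0, 0.05   # 2x params, val loss down 5%

# Reading 1: raw ratio of the two relative drops.
raw_ratio = corpus_drop / params_drop


# Reading 2 (assumption): exponent a in loss ~ scale**(-a), fitted from two points.
def loglog_slope(scale: float, drop: float) -> float:
    return -math.log(1.0 - drop) / math.log(scale)


slope_ratio = loglog_slope(corpus_scale, corpus_drop) / loglog_slope(params_scale, params_drop)

print(f"raw ratio of drops:  {raw_ratio:.1f}")
print(f"log-log slope ratio: {slope_ratio:.1f}")
```

The raw ratio comes out at about 3.2, which matches the stated 3:1. The log-log reading gives a smaller ratio of about 1.7, because the corpus was scaled 4x while the parameters were scaled only 2x. Corpus still comes out ahead of capacity under either reading.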

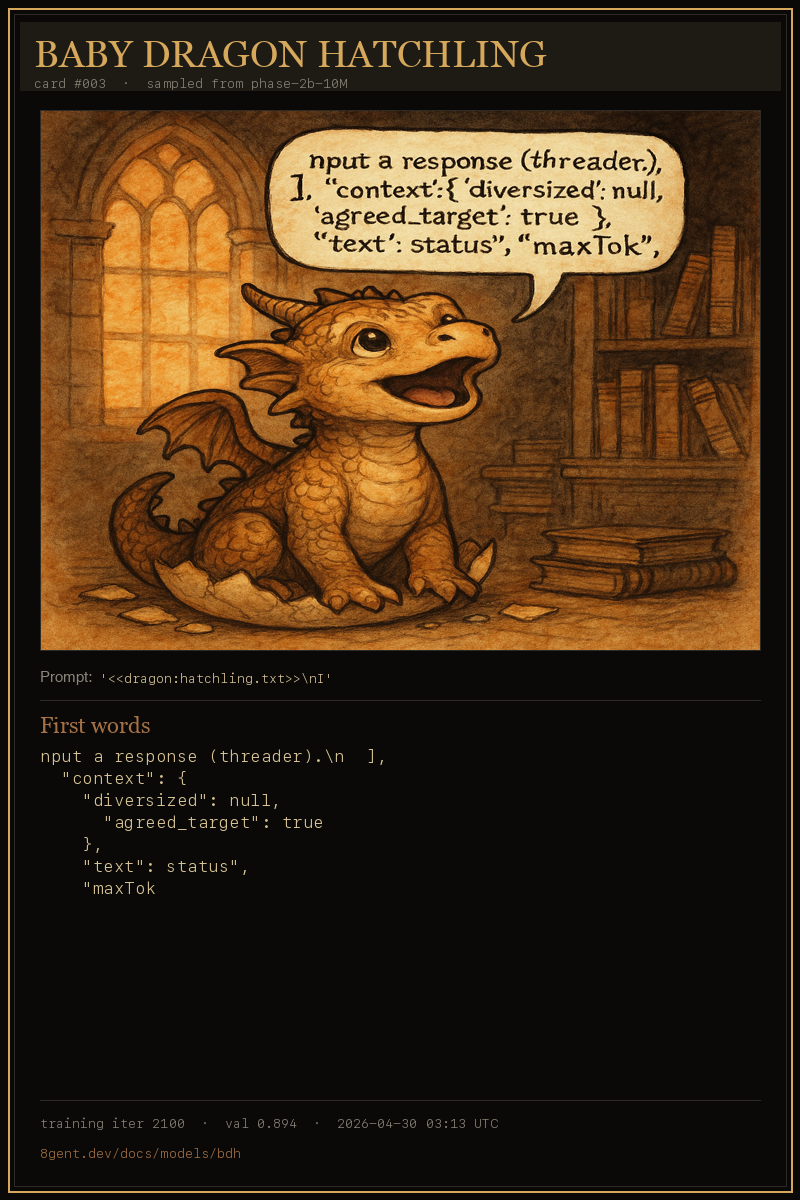

The Dragon's Diary

Trading cards captured during Phase 3c training. Each card samples his actual byte-level output and renders an image vibe-keyed to those exact words. As coherence improves across phases, the words get less noisy and the images get more cohesive.

Sampled from Phase 2b (10M heterogeneous) during Phase 3c training. Future runs will sample from each new checkpoint so the diary reflects coherence across phases.